The efficiency gains from AI in recruitment are real and measurable: faster screening, larger candidate pools, more structured assessment. But efficiency without governance produces systematic discrimination at scale. As HR leaders navigate the adoption of AI hiring tools, the question is no longer whether to use these systems. The question is how to use them responsibly.

Ethical AI hiring is not a compliance checkbox. It is the discipline of ensuring that automated systems designed to identify qualified talent do not instead encode historical biases, create legally prohibited disparate outcomes, or erode the trust of candidates your organization needs to attract. With the EU AI Act now creating binding obligations for organizations using AI in employment decisions, the business case for getting this right has become simultaneously more urgent and more consequential.

What is Ethical AI Hiring?

Ethical AI hiring is the practice of deploying artificial intelligence tools in talent acquisition processes in a manner that is fair, transparent, legally compliant, and subject to meaningful human oversight. It encompasses the selection and due diligence of AI vendors, the design of processes in which AI operates, the governance structures that monitor system performance, and the candidate experience throughout.

An ethical AI hiring framework addresses three distinct dimensions: preventing discriminatory outcomes (fairness), enabling informed oversight and candidate understanding (transparency), and establishing clear accountability when the system underperforms or causes harm (governance).

Why Ethical AI in Hiring Matters

The failure modes are not hypothetical. Amazon's internal recruitment AI, whose abandonment was reported in 2018, systematically downgraded resumes containing the word "women's" because it had been trained on a decade of applications to a company that had historically hired predominantly male engineers. The AI learned to replicate the bias, not correct it.

More recent independent audits of commercial hiring tools have found measurable disparate impact across gender and ethnic groups in approximately 30–40% of tested systems. When AI screening processes hundreds of thousands of applications across an enterprise, a 5% disparity in callback rates translates to thousands of candidates treated unequally.

Beyond the human cost, three business imperatives make ethical AI hiring strategically significant:

Legal exposure: Under the EU AI Act, non-compliance with high-risk obligations carries penalties of up to €15 million or 3% of global annual turnover, and prohibited practices carry up to €35 million or 7%. Employment discrimination claims are amplified, not diminished, when algorithms replace human discretion

Talent pool quality: Biased screening tools systematically exclude qualified candidates, which puts the company at a competitive disadvantage in talent acquisition

Employer brand: Candidates who experience opaque, unexplained AI screening report significantly lower satisfaction with the recruitment process, even when the outcome is positive. Reputation in talent markets is increasingly shaped by these experiences

30–40% of tested AI hiring tools show measurable algorithmic bias.

Is yours one of them?

— AI Now Institute Research

How to Implement Ethical AI Hiring: A Five-Step Framework

Step 1: Classify Your AI Tools Under the EU AI Act

The EU AI Act (Regulation (EU) 2024/1689) classifies AI systems used in employment decisions as high-risk under Annex III. This classification applies to tools that automate or substantially influence decisions about:

Recruitment and selection

Performance evaluation

Promotion decisions

Contract termination

If your organization operates in the EU or processes applications from EU residents, this classification is not optional. The first step is to inventory every AI tool in your talent acquisition stack, including features within your ATS that may use ML for ranking, scheduling, or matching, and to confirm which of them trigger high-risk classification.
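
The inventory step can be kept as structured data rather than a spreadsheet of free text. The following is a minimal sketch, not a legal test: the tool names, the field names, and the `needs_high_risk_review` helper are all hypothetical, and the rule it applies is only the article's own summary (a tool that automates or substantially influences one of the listed decision types for EU candidates). Anything it flags still needs confirmation by counsel.

```python
from dataclasses import dataclass

# Decision areas the article lists as high-risk under Annex III.
HIGH_RISK_AREAS = {"recruitment", "performance_evaluation", "promotion", "termination"}


@dataclass
class AITool:
    name: str                    # hypothetical tool or ATS feature name
    decision_areas: set[str]     # employment decisions the tool touches
    influences_decision: bool    # automates or substantially influences the outcome
    affects_eu_candidates: bool  # used in the EU or on applications from EU residents


def needs_high_risk_review(tool: AITool) -> bool:
    """Triage only: flag a tool for legal review as a likely high-risk system."""
    return (
        tool.affects_eu_candidates
        and tool.influences_decision
        and bool(tool.decision_areas & HIGH_RISK_AREAS)
    )


# Illustrative inventory; every entry is made up for the example.
inventory = [
    AITool("resume_ranker", {"recruitment"}, True, True),
    AITool("interview_scheduler", {"recruitment"}, False, True),
    AITool("promotion_scorer", {"promotion"}, True, False),
]

flagged = [tool.name for tool in inventory if needs_high_risk_review(tool)]
print(flagged)
```

Keeping the inventory in this form makes it easy to re-run the triage whenever a vendor adds an ML feature to an existing product.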

Step 2: Conduct Vendor Due Diligence on Bias and Compliance

Legal responsibility for discriminatory AI outcomes does not transfer to vendors. Your organization remains liable under EU employment discrimination law and the EU AI Act even when using third-party tools. This requires vendor due diligence that goes beyond standard IT security assessment:

Questions to ask every AI hiring vendor:

Has the system undergone an independent bias audit? By whom, and what were the results?

What demographic groups were represented in the training data?

Does the system produce or contribute to automated rejection decisions? If so, what is the human review pathway?

Can you provide technical documentation meeting EU AI Act conformity assessment standards?

How are candidates notified that AI is used in their evaluation?

Vendors who cannot answer these questions clearly should be treated as high-risk procurement decisions regardless of other product quality.

Step 3: Design Human Oversight Into Every AI Decision Point

The EU AI Act requires that high-risk AI systems be designed so humans can "effectively oversee" and "intervene in and override" automated outputs. In practice, this means AI in hiring should function as decision support, not decision replacement.

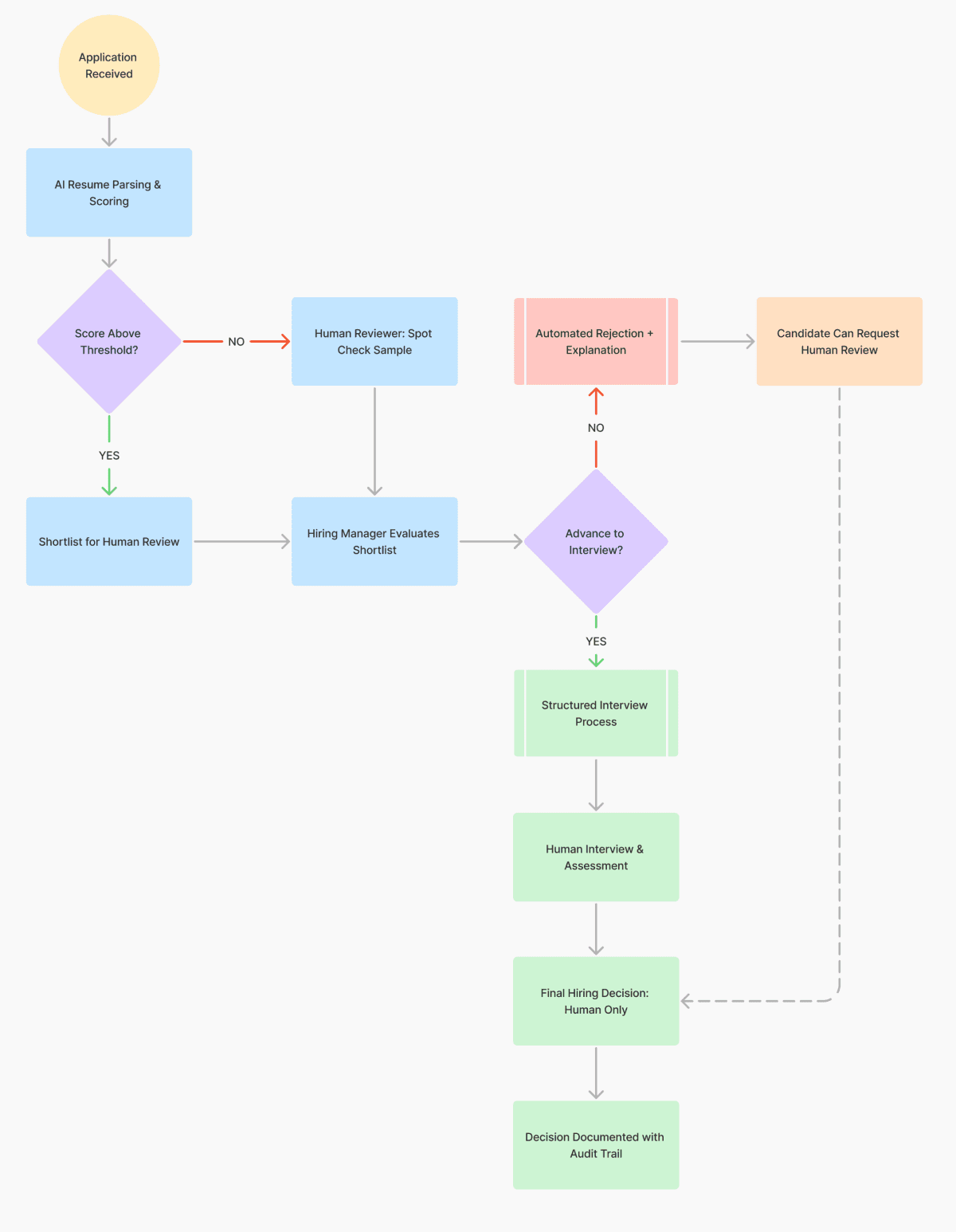

The AI hiring decision flow below illustrates how to structure human oversight at each stage:

Key design principles from this flow:

No fully automated rejection without human review pathway

Spot-check processes for candidates below AI thresholds (catches systematic exclusions)

Final hiring decisions are always human-made and documented

Rejected candidates receive meaningful explanation and appeal option
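
The design principles above can be sketched as a small routing function. This is an illustration under stated assumptions: the score scale, the `threshold` and `spot_check_rate` values, and the route names are hypothetical and not taken from any particular ATS. The point it shows is structural: no branch ends in an automated final rejection, and a sample of below-threshold candidates always reaches a human.

```python
import random
from enum import Enum
from typing import Optional


class Route(Enum):
    HUMAN_REVIEW = "human_review"            # recruiter reviews the AI recommendation
    SPOT_CHECK = "spot_check"                # sampled low scorer, reviewed by a human
    PENDING_REJECTION = "pending_rejection"  # rejection proposed; explanation and appeal still apply


def route_candidate(
    ai_score: float,
    threshold: float = 0.6,        # assumed cut-off on a 0..1 score
    spot_check_rate: float = 0.1,  # assumed share of low scorers sampled for review
    rng: Optional[random.Random] = None,
) -> Route:
    """Decide who looks at a candidate next; the AI score never decides alone."""
    rng = rng or random.Random()
    if ai_score >= threshold:
        return Route.HUMAN_REVIEW
    if rng.random() < spot_check_rate:
        return Route.SPOT_CHECK
    return Route.PENDING_REJECTION


# Example: a low scorer is either spot-checked or queued with appeal rights intact.
print(route_candidate(0.45).value)
```

Passing a seeded `random.Random` as `rng` makes the spot-check sampling reproducible, which helps when the sampling itself has to be documented.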

Step 4: Implement Candidate Transparency

Transparency in AI hiring operates at two levels: what candidates are told, and what they can access.

Proactive disclosure (required):

Job postings and application processes must inform candidates that AI tools are used in screening or assessment.

Candidates must understand what data is collected and how it influences decisions.

Reactive disclosure (required on request):

Candidates may request an explanation of the basis for AI-influenced decisions under both GDPR Article 22 and EU AI Act Article 86

"The algorithm ranked you lower" is not an adequate explanation. The response must identify the principal factors that influenced the outcome in terms candidates can understand

Human review option (required for high-risk applications):

Candidates subject to AI-assisted adverse decisions must have a clear, accessible mechanism to request human review.

This pathway must be real: routing requests back to the same automated system is not compliant.

Step 5: Establish Ongoing Bias Monitoring

Ethical AI hiring is not a deployment-time certification; it is an ongoing management responsibility. Bias can emerge or intensify over time as hiring patterns shift and AI systems are retrained on new data.

Minimum monitoring program for organizations using high-risk hiring AI:

Quarterly adverse impact analysis across gender, ethnicity, and age cohorts

Annual independent bias audit of primary screening tools

Incident documentation and remediation process when bias is detected

Board or executive-level reporting on AI hiring system performance
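
One common way to run the quarterly adverse impact analysis is to compare selection rates between groups. The sketch below uses the four-fifths heuristic from US EEOC practice as its flagging rule; that choice is an assumption for illustration, not something the EU AI Act prescribes, and the group labels and counts are invented. A flagged ratio is a prompt for investigation, not proof of discrimination.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applied, skipping groups with no applicants."""
    return {g: sel / applied for g, (sel, applied) in outcomes.items() if applied > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody was selected, so no disparity to measure
    return {g: rate / best for g, rate in rates.items()}


def flag_groups(outcomes: dict[str, tuple[int, int]], min_ratio: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the assumed four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < min_ratio)


# Hypothetical quarter: group -> (candidates advanced, candidates screened).
quarter = {"group_a": (120, 400), "group_b": (45, 250), "group_c": (58, 200)}

print(impact_ratios(quarter))
print(flag_groups(quarter))
```

With small groups the ratio is noisy, so a real program would pair it with a statistical significance test before drawing conclusions.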

Common Ethical AI Hiring Mistakes to Avoid

Mistake 1: Assuming vendor compliance transfers to you

Your vendor's compliance documentation covers their obligations as a provider. Your conformity assessment, usage documentation, and ongoing monitoring obligations are separate and your responsibility.

Mistake 2: Treating transparency as a legal technicality

Organizations that treat candidate transparency as a disclosure checkbox miss the point and the opportunity. Candidates who understand how AI is used in their evaluation, even imperfectly, report higher satisfaction with the recruitment process. Transparency is a candidate experience investment, not just a compliance cost.

Mistake 3: Deploying AI tools without bias baseline data

You cannot manage what you have not measured. Before deploying or expanding use of any AI hiring tool, establish demographic data on your current hiring funnel. You need a baseline to detect bias and to defend against discrimination claims if they arise.
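
A baseline can be as simple as stage-to-stage pass-through rates per demographic group, captured before the tool goes live. The sketch below assumes a hypothetical four-stage funnel and a record format of (group, furthest stage reached); both are placeholders for whatever your ATS actually exports.

```python
from collections import Counter

# Assumed funnel stages, in order; adjust to your own process.
STAGES = ["applied", "screened", "interviewed", "offered"]


def funnel_baseline(records):
    """records: iterable of (group, furthest_stage_reached) pairs.

    Returns, per group, the share of candidates passing from each stage to the next.
    """
    counts = {}
    for group, furthest in records:
        # A candidate who reached a stage also passed through every earlier one.
        reached = STAGES[: STAGES.index(furthest) + 1]
        counts.setdefault(group, Counter()).update(reached)

    baseline = {}
    for group, c in counts.items():
        baseline[group] = {
            f"{prev}->{nxt}": (c[nxt] / c[prev] if c[prev] else 0.0)
            for prev, nxt in zip(STAGES, STAGES[1:])
        }
    return baseline


# Invented records for illustration only.
records = [
    ("group_a", "offered"), ("group_a", "interviewed"),
    ("group_a", "screened"), ("group_a", "applied"),
    ("group_b", "screened"), ("group_b", "applied"),
]

for group, rates in funnel_baseline(records).items():
    print(group, rates)
```

Storing this snapshot with a date gives you the comparison point for later monitoring runs.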

Mistake 4: Ignoring the feedback loop risk

AI systems trained on your historical hiring data will learn to replicate your historical biases. If your past hiring skewed toward certain universities, tenure backgrounds, or credential patterns that correlate with demographics, you are not just replicating human bias; you are encoding it into a scalable automated system.

Ethical AI Hiring Best Practices

Based on research from the EU AI Act framework, bias audit methodologies, and leading-practice organizations:

Build an AI hiring governance committee – include HR, Legal, IT, and ideally an external ethics advisor, and give the committee clear authority to pause or override AI tool deployment

Audit before you deploy, not after a complaint – independent bias testing before full rollout catches problems at a fraction of the cost of discovering them after deployment at scale

Use structured assessments rather than unstructured data – AI screening of standardized assessments (skills tests, structured video interview questions) produces more defensible, less biased outputs than screening of unstructured resume text

Invest in explainable models – prioritize AI tools where the factors influencing candidate scoring can be stated in human terms, not just those that promise the highest accuracy

Document everything – EU AI Act conformity requires documentation. More practically, documentation is your organization's defense when a candidate challenges a screening outcome

Frequently Asked Questions

Yes, with some proportionality in implementation requirements. The EU AI Act applies to any organization that deploys high-risk AI in employment decisions affecting EU residents, regardless of company size. SMEs benefit from reduced documentation burden compared to large enterprises, but the core obligations—transparency, human oversight, bias monitoring—apply. Notably, if you are using a third-party ATS with AI features, the vendor's compliance documentation helps, but your own usage documentation and monitoring obligations remain.

The trigger is the use of AI to substantially influence employment decisions: hiring, promotion, performance evaluation, or termination. If your ATS uses machine learning to score, rank, or filter candidates without human review of the underlying logic, it likely qualifies. Review your vendor's documentation and consult legal counsel with EU AI Act expertise to confirm classification. When in doubt, apply high-risk compliance requirements: the cost of over-compliance is documentation; the cost of under-compliance is penalties of up to €15 million or 3% of global annual turnover.

Immediate steps: suspend the specific feature or decision pathway where bias is detected; document the incident; notify the appropriate supervisory authority if the bias has already affected real candidates at scale; conduct root cause analysis on training data and model parameters; and implement remediation before redeployment. Under EU AI Act Article 73, serious malfunctions, including significant bias incidents, must be reported to national supervisory authorities.

Under EU AI Act provisions for high-risk AI, fully automated final employment decisions without human involvement require the candidate to be explicitly notified of their right to request human review, and that request must be genuinely honored. In practice, most legal counsel advises that final hiring decisions should be made by a human who has reviewed AI recommendations, not delegated entirely to AI output, both as a compliance safeguard and as an ethical practice.

The explanation must be meaningful, describing the principal factors that influenced the scoring in terms the candidate can understand and potentially act on. "You did not meet the minimum score threshold" is insufficient. "Your application scored lower on the required technical skills section and did not demonstrate the 5 years of enterprise software experience listed as required" is the standard to meet. Build this explanation capability into your process before deployment, not as a reactive crisis response.
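
Building the explanation capability in advance can start with something as plain as the sketch below. It assumes the screening tool exposes, per requirement, what the role required and what it found in the application, plus a relative weight; the `Factor` fields and the ranking rule (weight times relative shortfall, with `required` greater than zero) are hypothetical simplifications, and many commercial tools will not expose their factors this cleanly.

```python
from dataclasses import dataclass


@dataclass
class Factor:
    description: str  # plain-language name of the requirement
    required: float   # level the role requires (assumed > 0)
    observed: float   # level found in the application
    weight: float     # relative influence on the score (assumed to be available)


def explain(factors: list[Factor], top_n: int = 2) -> str:
    """Name the principal unmet requirements in terms a candidate can act on."""
    gaps = [f for f in factors if f.observed < f.required]
    if not gaps:
        return "Your application met all listed requirements."
    # Rank by weighted relative shortfall so different units stay comparable.
    gaps.sort(key=lambda f: f.weight * (f.required - f.observed) / f.required, reverse=True)
    parts = [
        f"{f.description} (required: {f.required:g}, found: {f.observed:g})"
        for f in gaps[:top_n]
    ]
    return "The main factors in this outcome were: " + "; ".join(parts) + "."


# Invented factors for illustration.
message = explain([
    Factor("years of enterprise software experience", 5, 2, 0.5),
    Factor("technical skills assessment score", 70, 62, 0.3),
    Factor("language proficiency level", 3, 3, 0.2),
])
print(message)
```

A human reviewer should still read each generated message before it is sent, since a template cannot judge whether the stated factors are the real reason for the outcome.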

Conclusion

Ethical AI hiring is not in tension with efficient, data-driven talent acquisition. Done well, it produces both: AI tools that accurately identify qualified candidates from large pools, with governance that ensures accuracy is measured against the right objectives (skills, competencies, and job-relevant potential) rather than proxies that correlate with demographic characteristics.

The organizations that implement ethical AI hiring frameworks now will be better positioned as enforcement of the EU AI Act intensifies and as candidates increasingly expect, and reward, transparency from employers. The legal exposure from non-compliance is significant. The competitive advantage from getting this right is substantial.

Start with your vendor audit.

Build your governance committee.

Establish your bias baseline.

The framework exists; the regulation is clear; the tools to comply responsibly are available.

What remains is organizational will.

External Links

EU AI Act Official Text - regulation text for reference

EEOC Guidance on Employment Algorithms - US regulatory comparison